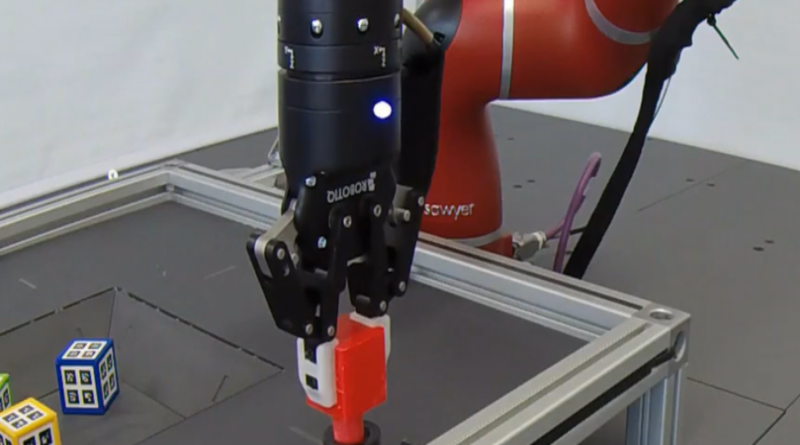

DeepMind researchers introduce hybrid solution to robot control problems

Fundamental problems in robotics involve both discrete variables, such as the choice of control mode or gear switching, and continuous variables, such as velocity setpoints and control gains. These problems are often difficult to tackle because it isn’t always obvious which algorithms or control policies fit them best. That’s why researchers at DeepMind, the AI lab owned by Google parent company Alphabet, recently proposed a technique called continuous-discrete hybrid learning. It optimizes discrete and continuous actions simultaneously, treating hybrid problems in their native form.

A paper published on the preprint server arXiv.org details their work, which was accepted to the 3rd Conference on Robot Learning, held in Osaka, Japan, in October 2019. “Many state-of-the-art … approaches have been optimized to work well with either discrete or continuous action spaces but can rarely handle both … or perform better in one parameterization than another,” the coauthors wrote. “Being able to handle both discrete and continuous actions robustly with the same algorithm allows us to choose the most natural solution strategy for any given problem rather than letting algorithmic convenience dictate this choice.”
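The article doesn’t spell out the paper’s math, but the core idea of a single policy that emits both kinds of action can be illustrated. The sketch below assumes a policy that factorizes into an independent categorical distribution over discrete modes (say, which gear to use) and a diagonal Gaussian over continuous values (say, a velocity setpoint). It is an illustration of a hybrid action space, not DeepMind’s implementation, and the names `HybridPolicyOutput`, `sample_hybrid_action` and `hybrid_log_prob` are hypothetical.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class HybridPolicyOutput:
    """Hypothetical policy output for one state (assumed factorized form)."""
    mode_logits: List[float]  # one unnormalized score per discrete mode, e.g. gear
    mean: List[float]         # Gaussian mean per continuous dimension, e.g. setpoint
    std: List[float]          # Gaussian standard deviation per continuous dimension


def softmax(logits: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_hybrid_action(out: HybridPolicyOutput,
                         rng: random.Random) -> Tuple[int, List[float]]:
    # Discrete part: draw a mode index from the categorical distribution.
    probs = softmax(out.mode_logits)
    mode = rng.choices(range(len(probs)), weights=probs, k=1)[0]
    # Continuous part: draw each dimension from its own Gaussian.
    cont = [rng.gauss(mu, s) for mu, s in zip(out.mean, out.std)]
    return mode, cont


def hybrid_log_prob(out: HybridPolicyOutput, mode: int, cont: List[float]) -> float:
    # Under the independence assumption, the joint log-probability is the
    # categorical log-probability plus the sum of Gaussian log-densities.
    log_p_discrete = math.log(softmax(out.mode_logits)[mode])
    log_p_cont = 0.0
    for x, mu, s in zip(cont, out.mean, out.std):
        log_p_cont += (-0.5 * ((x - mu) / s) ** 2
                       - math.log(s)
                       - 0.5 * math.log(2 * math.pi))
    return log_p_discrete + log_p_cont


if __name__ == "__main__":
    out = HybridPolicyOutput(mode_logits=[0.0, 1.0], mean=[0.5], std=[0.2])
    rng = random.Random(0)
    mode, cont = sample_hybrid_action(out, rng)
    print(mode, cont, hybrid_log_prob(out, mode, cont))
```

Because both parts of the action contribute to one log-probability, a single learning algorithm can in principle adjust the discrete and continuous choices together rather than handling them with separate methods.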